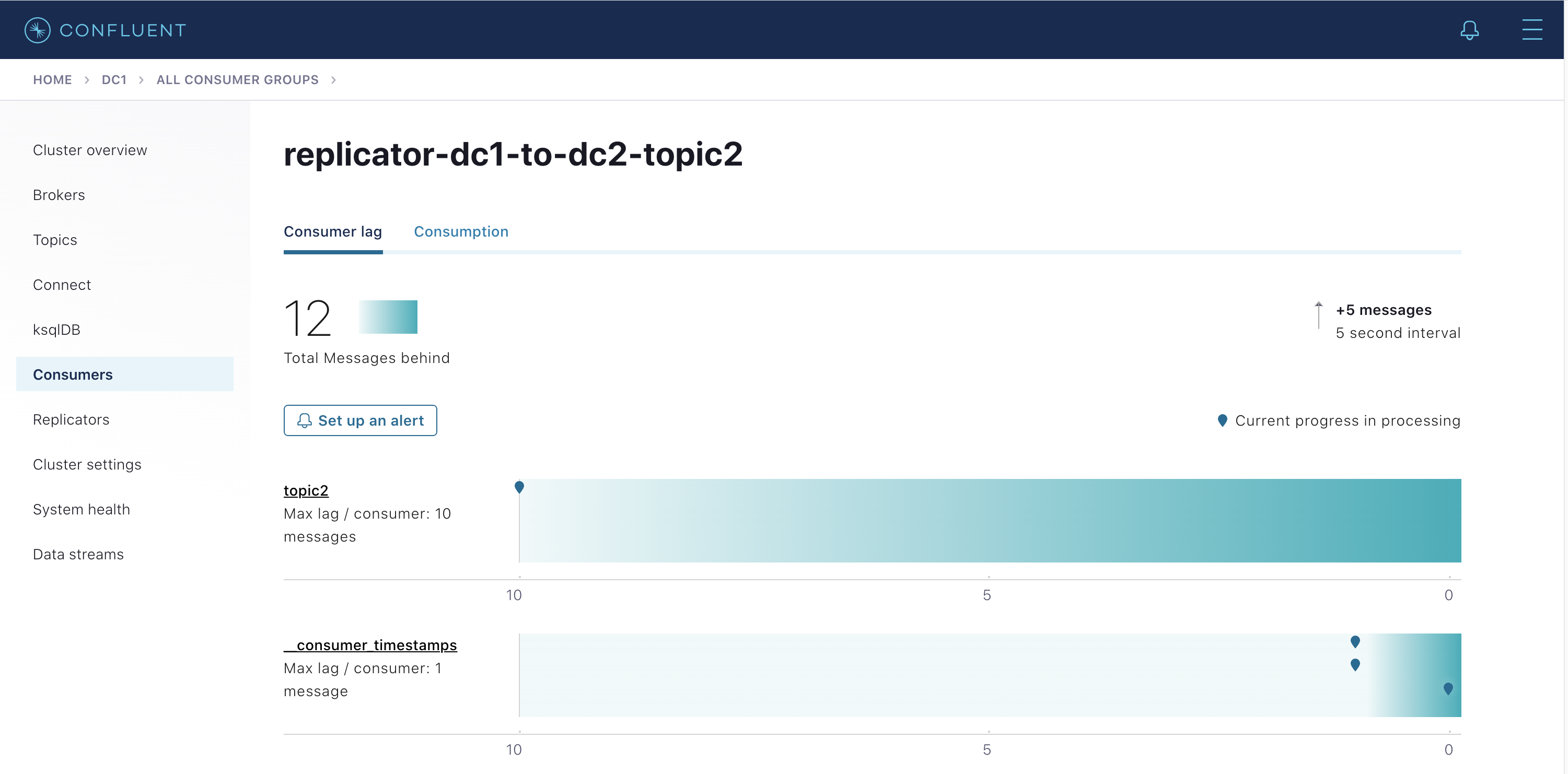

To run Replicator in a Kafka Connect cluster, first initialize Kafka Connect; in a production environment, always use distributed mode for scalability and fault tolerance. You can use Confluent Control Center to manage all Kafka connectors centrally. Then add the Replicator connectors to Kafka Connect: one Replicator runs in DC-2 and copies data from DC-1 to DC-2, and the other runs in DC-1 and copies data from DC-2 to DC-1. You can manage and inspect these connectors through the Kafka Connect REST API.

Once up and running, Replicator copies every topic that passes its filters:

- Topic filter: the whitelist, blacklist, and regular expression defined in the Replicator configuration file.
- Message filter: provenance information in the message headers, used to automatically avoid replication cycles between the two data centers.

Replicator is easy to get running, but you still need to make sure it is installed and configured correctly, and that the deployment is tuned for your actual environment. Replicator also supports secure communication between the two data centers over SSL.
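As a rough illustration of adding a connector through the Kafka Connect REST API, the sketch below builds the `POST /connectors` request without sending it. The host names, connector name, and the specific Replicator property names are assumptions for illustration (they are not given in the text above) and should be checked against the Replicator documentation for your version.

```python
import json
import urllib.request

# Assumed address of a Kafka Connect worker in DC-2 (8083 is Connect's default REST port).
CONNECT_URL = "http://connect-dc2.example.com:8083"


def build_create_request(name, config, base_url=CONNECT_URL):
    """Build (but do not send) the POST /connectors request that registers a connector."""
    body = json.dumps({"name": name, "config": config}).encode("utf-8")
    return urllib.request.Request(
        url=f"{base_url}/connectors",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def status_url(name, base_url=CONNECT_URL):
    """URL of the REST endpoint that reports a connector's state and its tasks."""
    return f"{base_url}/connectors/{name}/status"


# Illustrative Replicator settings; property names and values are assumptions.
replicator_config = {
    "connector.class": "io.confluent.connect.replicator.ReplicatorSourceConnector",
    "src.kafka.bootstrap.servers": "kafka-dc1.example.com:9092",
    "dest.kafka.bootstrap.servers": "kafka-dc2.example.com:9092",
    "topic.whitelist": "topic1",
    "tasks.max": "1",
}

request = build_create_request("replicator-dc1-to-dc2", replicator_config)
# To register the connector for real: urllib.request.urlopen(request)
```

Keeping request construction separate from sending makes the payload easy to inspect before it reaches a live Connect cluster.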


This section describes how to run Replicator as separate connectors inside a Kafka Connect cluster. In the master-slave design, new messages produced in DC-2 while DC-1 is down must be copied back to DC-1 once it recovers, and Replicator is used to restore service. In the dual-master design this step is not needed, because two separate Replicators already run, one in each direction. To simplify the dual-master design, we configure `=false` to stop Replicator from committing its consumer timestamps.

In the dual-master design, a minimal Replicator configuration handles replication from DC-1 to DC-2. We assume a topic whitelist containing a single topic, topic1, which is configured in both data centers. If you use Confluent Schema Registry, the topic filter should also include the `_schemas` topic, but that topic only needs one-way replication. The Replicator configuration also enables the use of message-header information to avoid cyclical replication of a topic. A second minimal Replicator configuration handles the copy from DC-2 to DC-1. It is very similar to the first, except that it has a different name and consumer group ID, the values of and are exchanged, and it does not need to replicate `_schemas`.
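The two dual-master configurations can be sketched as a pair of dictionaries built by one helper. This is only an illustration under stated assumptions: the host names, connector names, and every property name below are not given in the text and are assumed from Replicator's documented settings, so verify them before use.

```python
# Assumed bootstrap addresses for the two data centers (hypothetical hosts).
DC1_BROKERS = "kafka-dc1.example.com:9092"
DC2_BROKERS = "kafka-dc2.example.com:9092"


def replicator_config(name, src, dest, topics):
    """Return a minimal, illustrative Replicator connector configuration."""
    return {
        "name": name,
        "connector.class": "io.confluent.connect.replicator.ReplicatorSourceConnector",
        "src.kafka.bootstrap.servers": src,
        "dest.kafka.bootstrap.servers": dest,
        # Each direction gets its own consumer group ID.
        "src.consumer.group.id": name,
        "topic.whitelist": ",".join(topics),
        # Assumed switch for header-based cycle avoidance.
        "provenance.header.enable": "true",
    }


# DC-1 to DC-2: replicates topic1 and, when Schema Registry is used, _schemas.
dc1_to_dc2 = replicator_config(
    "replicator-dc1-to-dc2", DC1_BROKERS, DC2_BROKERS, ["topic1", "_schemas"]
)

# DC-2 to DC-1: different name and group ID, source and destination exchanged,
# and no _schemas, because that topic only needs one-way replication.
dc2_to_dc1 = replicator_config(
    "replicator-dc2-to-dc1", DC2_BROKERS, DC1_BROKERS, ["topic1"]
)
```

Generating both directions from one helper keeps the two configurations identical except for the differences the text calls out.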

Note that Confluent Replicator has its own built-in consumer which, by default, commits its consumer timestamps to the origin cluster. Its offsets are then translated for the target cluster.

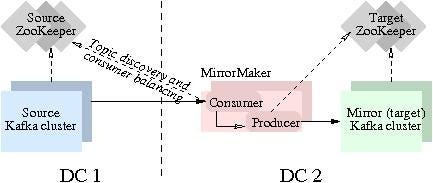

Configuring Confluent Replicator for multi-data-center deployments: Confluent Replicator is a Kafka connector that runs within the Kafka Connect framework. Replicator inherits all the advantages of the Kafka Connect API, including scalability, performance, and fault tolerance. Confluent Replicator consumes messages from the origin cluster and writes them to the target cluster. The Kafka Connect workers are deployed in the same data center as the target cluster.

In the master-slave design, data is replicated one way: a single Replicator connector runs and copies data from DC-1 to DC-2, as shown in the figure. In the dual-master design, data is copied in both directions: one Replicator connector copies data from DC-1 to DC-2, and another copies data from DC-2 to DC-1. The two Replicator connectors use different configuration parameters, which are listed in the accompanying table.
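With one connector per direction, a message copied from DC-1 to DC-2 must not be copied back again. The toy model below is not Replicator code. It is a minimal in-memory sketch, with hypothetical class names, of how provenance information carried in message headers can stop such a cycle: a message is skipped when its provenance already lists the destination cluster.

```python
from dataclasses import dataclass, field


@dataclass
class Message:
    value: str
    # IDs of the clusters this message has already been replicated from.
    provenance: tuple = ()


@dataclass
class Cluster:
    cluster_id: str
    log: list = field(default_factory=list)


class ToyReplicator:
    """Copies new messages from src to dest, skipping ones that originated in dest."""

    def __init__(self, src, dest):
        self.src = src
        self.dest = dest
        self.position = 0  # index of the next unread message in src.log

    def run_once(self):
        copied = 0
        for msg in self.src.log[self.position:]:
            # Provenance check: never send a message back to a cluster it came from.
            if self.dest.cluster_id not in msg.provenance:
                stamped = Message(msg.value, msg.provenance + (self.src.cluster_id,))
                self.dest.log.append(stamped)
                copied += 1
        self.position = len(self.src.log)
        return copied


dc1 = Cluster("DC-1", [Message("a")])
dc2 = Cluster("DC-2", [Message("b")])
dc1_to_dc2 = ToyReplicator(dc1, dc2)
dc2_to_dc1 = ToyReplicator(dc2, dc1)

# Round 1 copies each locally produced message across once; round 2 copies nothing.
round1 = (dc1_to_dc2.run_once(), dc2_to_dc1.run_once())
round2 = (dc1_to_dc2.run_once(), dc2_to_dc1.run_once())
```

After both rounds each cluster holds exactly one copy of `a` and `b`; without the provenance check, every round would bounce the copied messages back and the logs would grow without bound.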